AI-Driven Intangible Asset Valuation

Intangible assets have long posed a basic challenge to the valuation profession: they can be the most valuable part of a business today, and at the same time the most difficult to value accurately. A proprietary algorithm, a loyal customer base, a globally recognised brand, or a portfolio of patents creates real economic value, and sophisticated acquirers and investors readily recognise that value. In practice, however, quantifying it reliably has demanded a great deal of time and expert judgment, and has often had to rely on incomplete or opaque data. Artificial intelligence is changing this equation in fundamental ways. AI intangible asset valuation techniques do not merely do old things faster; they represent a shift in what is analytically feasible, what data can be used, and how conclusions can be drawn and justified.

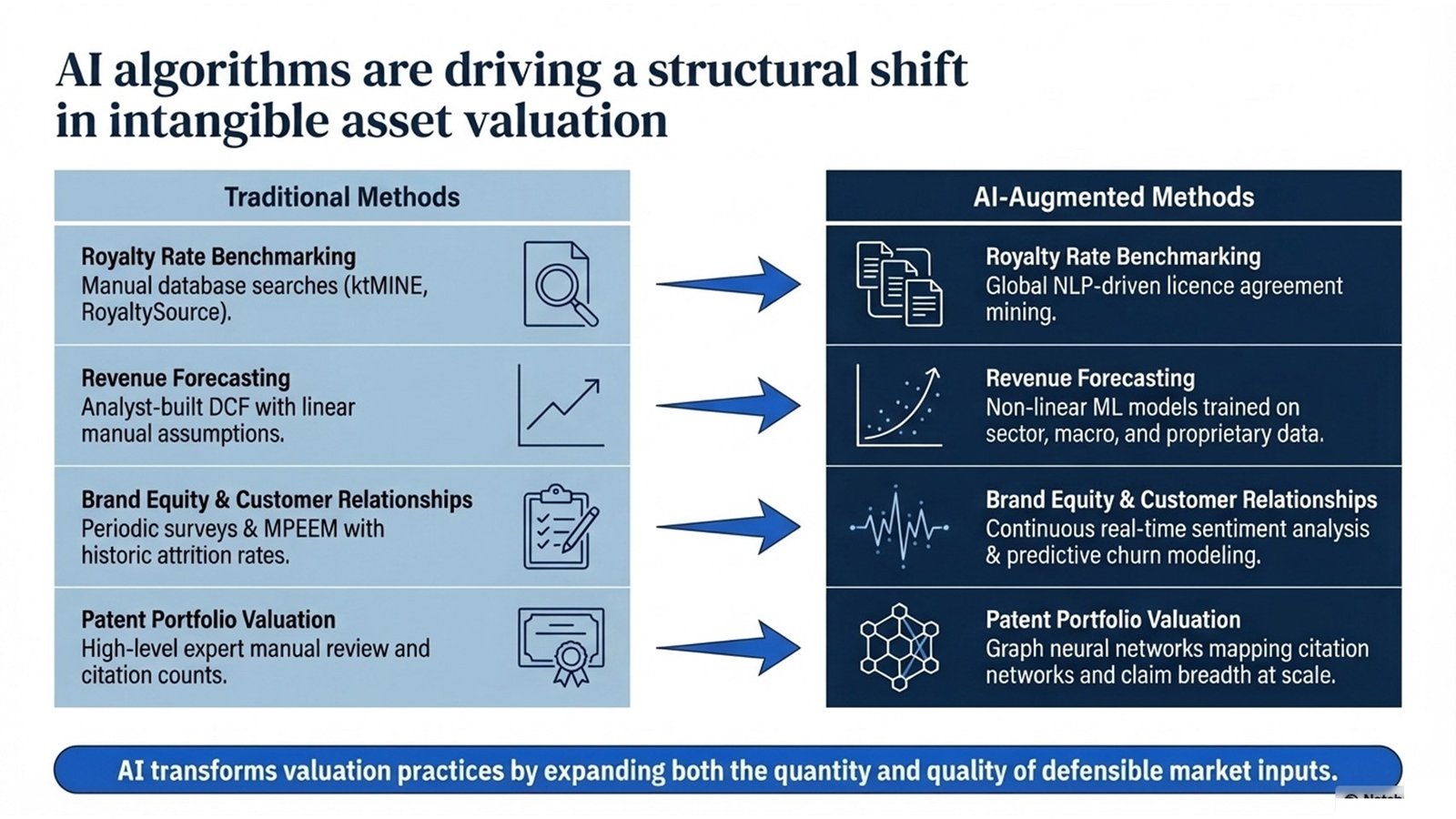

The change can already be observed throughout the valuation profession. Machine learning models are producing cash flow forecasts that are more precise and require less manual effort. Natural language processing tools are mining thousands of licence agreements and M&A filings to extract royalty rate benchmarks that previously took analysts weeks to assemble by hand. Graph neural networks are learning to map patent citation relationships at a scale and depth that no team of human reviewers could match. Real-time sentiment analysis is enabling continuous monitoring of brand equity, in place of the periodic, survey-based approximations that have been the status quo. Put simply, AI alters valuation practice by increasing both the number and the quality of the inputs that can support a defensible valuation opinion.

For junior and mid-level professionals pursuing a career in corporate finance, M&A advisory, transfer pricing, or forensic accounting, understanding how AI is transforming intangible asset valuation is no longer optional; it is becoming a standard professional expectation. This article offers a systematic, practice-based introduction to the modern intangible asset valuation methods being changed by AI, how AI tools can best be introduced into the valuation process, which pitfalls to avoid, and what can be learned from early adopters. Cases, process flows, and comparison tables appear throughout to ground the discussion in practice.

How Artificial Intelligence is Reshaping Valuation Fundamentals

To see the impact of AI on the valuation of intangible assets, it is useful to begin with the fundamental activities a valuation engagement involves: identifying the assets, collecting and cleaning the data, selecting and applying a methodology, stress-testing the results, and producing a written, defensible opinion. All of these tasks have traditionally been labour-intensive, judgement-based, and limited by the analyst's access to information. AI is improving performance on all five dimensions, but the extent of the improvement depends heavily on the task, the quality of the data available, and the maturity of the tools being used.

The improvement is probably most striking at the data-gathering stage. Large language models and natural language processing systems can now process thousands of publicly available licence agreements, judicial decisions, regulatory filings, and M&A disclosures in the time it would take a human analyst to read a few. This is especially useful for royalty rate benchmarking, one of the most important and traditionally most time-consuming inputs to income-based valuation techniques. Capabilities that used to be the preserve of specialist IP analytics companies can now be accessed through commercial platforms as part of regular valuation processes. As a result, AI intangible asset valuation approaches can draw on much richer and more representative market evidence than was available five years ago.
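Once royalty rates have been extracted from a set of comparable agreements, they still have to be condensed into a benchmark range. The sketch below shows one simple way this might be done, using an interquartile range and a median. The function name `summarise_royalty_rates`, the minimum of four comparables, and the sample rates are all illustrative assumptions, not figures or methods taken from any real dataset or platform.

```python
from statistics import median, quantiles


def summarise_royalty_rates(rates):
    """Condense extracted royalty rates into a quartile-based benchmark range."""
    if len(rates) < 4:
        # Assumed threshold: too few comparables for a meaningful range.
        raise ValueError("need at least four comparable agreements")
    q1, _, q3 = quantiles(rates, n=4, method="inclusive")
    return {"q1": q1, "median": median(rates), "q3": q3, "n": len(rates)}


# Hypothetical rates (fraction of net sales) from eight comparable licences.
extracted = [0.02, 0.03, 0.035, 0.04, 0.045, 0.05, 0.06, 0.08]
summary = summarise_royalty_rates(extracted)
print(f"n={summary['n']}  Q1={summary['q1']:.2%}  "
      f"median={summary['median']:.2%}  Q3={summary['q3']:.2%}")
```

A range of this kind is only as good as the comparability screening behind it; the analyst still has to judge whether each agreement is genuinely comparable before it enters the list.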

At the modelling stage, machine learning is improving the accuracy of financial forecasts, especially for intangible-rich businesses whose revenue patterns are less predictable than the stable trajectories assumed in conventional DCF models. A technology platform with viral growth characteristics, a pharmaceutical firm in the middle of a patent cliff, and a consumer brand recovering from a reputational crisis all pose forecasting problems that traditional linear models handle poorly. ML models trained on industry-specific data, macroeconomic factors, and the company's own historical trends can capture non-linear dynamics that human analysts, constrained by time and cognitive bandwidth, might miss or smooth over. This is a real improvement in the quality of modern intangible asset valuation, not a cosmetic one.

Key AI Intangible Asset Valuation Methods in Practice

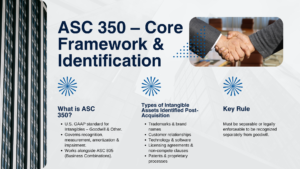

The following table compares traditional and AI-enhanced approaches across specific valuation tasks. The comparison is intended to be realistic and candid: AI enhancement does not provide an equal boost to all tasks, and the extent of the improvement depends greatly on the quality and availability of training data, the maturity of the tool chosen, and the analyst's ability to interpret and validate the results.

| Valuation Task | Traditional Approach | AI-Augmented Approach | Key Improvement |
|---|---|---|---|
| Royalty rate benchmarking | Manual database searches (ktMINE, RoyaltySource) | NLP-based mining of licence agreements in international filings | Speed, coverage, and comparability |
| Revenue forecasting | Manually constructed DCF | ML models trained on sector, macroeconomic, and proprietary data | Reduced anchoring bias; broader information sources |
| Brand equity measurement | Survey-based; periodic consumer studies | Real-time sentiment analysis of social media and review data | Continuous, high-frequency monitoring |
| Discount rate estimation | CAPM/WACC using published betas and risk premia | ML peer-group clustering; dynamic betas | More precise peer selection; forward-looking inputs |
| Patent portfolio valuation | Expert review; citation counts; renewal data | Graph neural networks modelling citation networks and claim breadth | Scalability across large portfolios |
| Customer relationship valuation | MPEEM with historical attrition rates | CRM-based behavioural churn prediction models | Forward-looking attrition; greater granularity |

Table 1: Traditional vs AI-Augmented Approaches Across Core Valuation Tasks

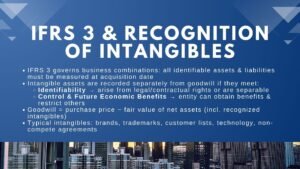

One of the most effective AI intangible asset valuation tools currently in use is natural language processing applied to patent portfolios. AI-driven platforms such as PatSnap, Derwent Innovation, and Cipher can analyse the linguistic content of patent claims, map citation networks, identify white-space opportunities, and estimate the strength of a portfolio's defensive moat, all at a scale that is simply unachievable with manual review. For valuation professionals performing purchase price allocations that include large patent portfolios, this capability turns what used to be an exercise heavily dependent on expert opinion into one based on systematic, reproducible analysis.

The second area where AI has a major influence is customer relationship valuation. The most widely used method for valuing customer-related intangibles in IFRS 3 purchase price allocations is the multi-period excess earnings method (MPEEM), which requires an estimate of the customer attrition rate: the rate at which the acquired customer base will erode over time. Traditionally, this has been estimated from historical attrition data, which may be incomplete, smoothed, or unrepresentative of post-acquisition conditions. AI churn-prediction models trained on granular CRM data, transactional behaviour, product usage patterns, and customer demographics can produce forward-looking attrition estimates that are both more accurate and more detailed than their historical-average equivalents. The resulting MPEEM valuations better reflect the economic reality of the acquired customer base, and this shows how AI transforms valuation practice at the level of individual methodology.
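To make the role of the attrition assumption concrete, the sketch below implements a deliberately simplified MPEEM and compares a flat historical attrition rate with a year-by-year schedule of the kind a churn model might supply. It is a minimal illustration under stated assumptions: contributory asset charges are modelled as a flat percentage of revenue, a mid-year discounting convention is used, and the tax amortisation benefit is omitted. The function name `mpeem_value` and every input figure are hypothetical.

```python
def mpeem_value(base_revenue, attrition_rates, ebit_margin, tax_rate,
                cac_rate, discount_rate, growth=0.0):
    """Present value of excess earnings from an acquired customer base.

    attrition_rates: one annual attrition rate per projection year.
    cac_rate: contributory asset charges as a fraction of revenue (assumed flat).
    """
    value, survival = 0.0, 1.0
    for year, attrition in enumerate(attrition_rates, start=1):
        survival *= 1.0 - attrition  # share of acquired customers still remaining
        revenue = base_revenue * (1.0 + growth) ** year * survival
        after_tax_earnings = revenue * ebit_margin * (1.0 - tax_rate)
        excess_earnings = after_tax_earnings - revenue * cac_rate
        # Mid-year convention: cash flows assumed to arrive halfway through the year.
        value += excess_earnings / (1.0 + discount_rate) ** (year - 0.5)
    return value


# Hypothetical inputs: flat historical average vs. a forward-looking schedule.
flat = mpeem_value(100.0, [0.10] * 5, 0.20, 0.25, 0.05, 0.12)
forward = mpeem_value(100.0, [0.18, 0.14, 0.10, 0.08, 0.06], 0.20, 0.25, 0.05, 0.12)
print(f"flat attrition: {flat:.1f}   forward-looking attrition: {forward:.1f}")
```

In this made-up case the forward-looking schedule front-loads customer losses, so the resulting value is lower than under the flat average even though later-year attrition is milder. That sensitivity to the shape of the attrition curve is what makes the input worth estimating carefully.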

Five Key Steps for Integrating AI into Intangible Asset Valuation

Integrating AI tools into a valuation practice is not as simple as subscribing to a software platform. It requires careful design, sound governance, and a willingness to invest in both technical capability and professional judgment. The process flow below sets out the seven stages of an AI-enhanced valuation engagement, from scoping to report delivery; the five key disciplines of integration are discussed in the paragraphs that follow it.

| Step | Activity | AI Application / Output |
|---|---|---|
| 1 | Define the scope, asset types, and purpose of the valuation | Engagement scoping; LLM-assisted retrieval and summarisation of previous reports |
| 2 | Ingest and clean financial, operational, and market data | Automated data pipelines; anomaly detection; OCR of filings |
| 3 | Identify comparable transactions and royalty benchmarks | NLP contract mining; ML-based comparability scoring; database augmentation |
| 4 | Build and test valuation models | ML-optimised revenue forecasts; dynamic WACC estimation; scenario generation |
| 5 | Run sensitivity and scenario analysis | Large-scale Monte Carlo simulation; AI-generated scenario narratives |
| 6 | Validate outputs against market data and expert analysis | Root-cause analysis; automated back-testing; auditor-ready reports |
| 7 | Draft and finalise the valuation report | LLM-assisted report drafting; automated documentation of assumptions; audit trail creation |

Process Flow 1: AI-Augmented Intangible Asset Valuation — Step by Step Workflow
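Step 5 of the workflow refers to Monte Carlo simulation. As a rough illustration of what that involves, the sketch below simulates a relief-from-royalty valuation under uncertain royalty rate, growth, and discount rate assumptions. The distributions, their parameters, the five-year horizon, and the function names are all assumptions chosen for illustration; a real engagement would calibrate them to evidence and would normally add a terminal value and tax amortisation benefit.

```python
import random
from statistics import mean, quantiles


def relief_from_royalty(revenues, royalty_rate, tax_rate, discount_rate):
    """Present value of after-tax royalty savings over the projection period."""
    return sum(
        rev * royalty_rate * (1.0 - tax_rate) / (1.0 + discount_rate) ** t
        for t, rev in enumerate(revenues, start=1)
    )


def simulate(n_trials=10_000, seed=42):
    """Draw valuation inputs at random and return the simulated values."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    values = []
    for _ in range(n_trials):
        royalty = rng.triangular(0.03, 0.06, 0.04)  # arguments: low, high, mode
        growth = rng.gauss(0.05, 0.02)              # mean, standard deviation
        discount = rng.uniform(0.09, 0.13)
        revenues = [100.0 * (1.0 + growth) ** t for t in range(1, 6)]
        values.append(relief_from_royalty(revenues, royalty, 0.25, discount))
    return values


values = simulate()
cuts = quantiles(values, n=20)  # 19 cut points at 5% intervals
p5, p95 = cuts[0], cuts[-1]
print(f"mean={mean(values):.1f}  5th pct={p5:.1f}  95th pct={p95:.1f}")
```

Reporting a percentile band alongside the point estimate gives the reader of the valuation a sense of how much the conclusion depends on the input assumptions.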

The first discipline is to match the AI tool to the task, and not the other way round. The temptation, especially among teams that have only just started using AI, is to apply the most advanced tool available to every problem. In practice, a large language model is very good at synthesising unstructured text, but it is unnecessary for a simple royalty rate search in which a well-curated database and a human analyst can produce results that are good enough. The aim is to augment contemporary intangible asset valuation techniques, not to replace human judgement. An objective assessment of which tasks in the valuation workflow AI actually improves, and where traditional methods remain more appropriate, is the key to successful integration.

The second discipline is to invest in data quality before model sophistication. AI tools are only as good as the information they are trained on or fed with. A machine learning model trained on incomplete, inconsistently formatted, or unrepresentative financial data will produce outputs that appear precise yet are unreliable. Before rolling out any AI-based forecasting or benchmarking tool on a live engagement, the team needs to implement robust data ingestion and cleaning procedures and to document the data sources used, so that the auditor or client can evaluate their quality and relevance.
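A data-quality gate of this kind does not need to be elaborate to be useful. The sketch below counts missing values and duplicate records and lists the documented sources before any data reaches a model. The record layout, the field names (`entity`, `period`, `revenue`, `source`), and the function name `data_quality_report` are hypothetical; a real pipeline would add checks specific to the engagement.

```python
def data_quality_report(rows, required_fields, key_fields=("entity", "period")):
    """Summarise basic defects in a list of record dicts before modelling."""
    missing = sum(
        1 for row in rows for field in required_fields
        if row.get(field) in (None, "")
    )
    keys = [tuple(row.get(k) for k in key_fields) for row in rows]
    duplicates = len(keys) - len(set(keys))
    # Listing sources supports the documentation an auditor or client will ask for.
    sources = sorted({row.get("source", "undocumented") for row in rows})
    return {
        "rows": len(rows),
        "missing_values": missing,
        "duplicate_keys": duplicates,
        "sources": sources,
    }


# Hypothetical records for illustration only.
rows = [
    {"entity": "TargetCo", "period": "2022", "revenue": 120.0, "source": "audited accounts"},
    {"entity": "TargetCo", "period": "2023", "revenue": None, "source": "management accounts"},
    {"entity": "TargetCo", "period": "2023", "revenue": 131.5, "source": "management accounts"},
]
report = data_quality_report(rows, required_fields=["revenue"])
print(report)
```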

The third discipline is to ensure explainability. Among the biggest practical issues with AI-enhanced valuations is the risk of generating outputs that neither the valuer nor the reviewer can fully explain. Black-box models, in which inputs go in and outputs come out with no visible reasoning in between, are a problem in a valuation context, where the credibility of the opinion depends on how clearly the practitioner can explain and justify every material assumption. When using AI tools, the valuer should be able to explain in plain language what the model did, what data drove it, what assumptions it made, and why the result is sensible. This is not a counsel of perfection; it is a practical professional requirement, especially where the valuation will be reviewed by an auditor, a tax authority, or a court.

The fourth discipline is to test AI results against independent benchmarks. AI-generated royalty rates, attrition rates, growth rates, and discount rates must be compared with manually derived values, published data, or expert judgement. When an AI output differs materially from the manual benchmark, the analyst has to determine the source of the divergence and then decide which figure to use. Building this validation step explicitly into the workflow, and treating the AI output as one input to a rigorous professional process and not as a definitive answer, is what separates a robust AI-enhanced valuation from one that sounds impressive but is shallow.
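The comparison described above can be built into the workflow as an automatic check. The sketch below flags any AI-generated input that differs from its manual benchmark by more than a relative tolerance. The 20% threshold, the function name `flag_divergences`, and the sample values are illustrative assumptions; what counts as a material difference is a matter of professional judgement for each engagement.

```python
def flag_divergences(ai_values, benchmarks, tolerance=0.20):
    """Return inputs whose AI estimate differs from the benchmark by more than tolerance.

    The result maps each flagged input name to its relative difference.
    """
    flagged = {}
    for name, ai_value in ai_values.items():
        benchmark = benchmarks[name]
        relative_diff = (ai_value - benchmark) / benchmark
        if abs(relative_diff) > tolerance:
            flagged[name] = relative_diff
    return flagged


# Hypothetical AI outputs and manually derived benchmarks.
ai_values = {"royalty_rate": 0.050, "attrition_rate": 0.14, "discount_rate": 0.110}
benchmarks = {"royalty_rate": 0.045, "attrition_rate": 0.10, "discount_rate": 0.105}

for name, diff in flag_divergences(ai_values, benchmarks).items():
    print(f"{name}: AI estimate is {diff:+.0%} vs. benchmark; investigate before use")
```

A flag does not mean the AI figure is wrong. It means the analyst must explain the gap and record which figure was adopted and why.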

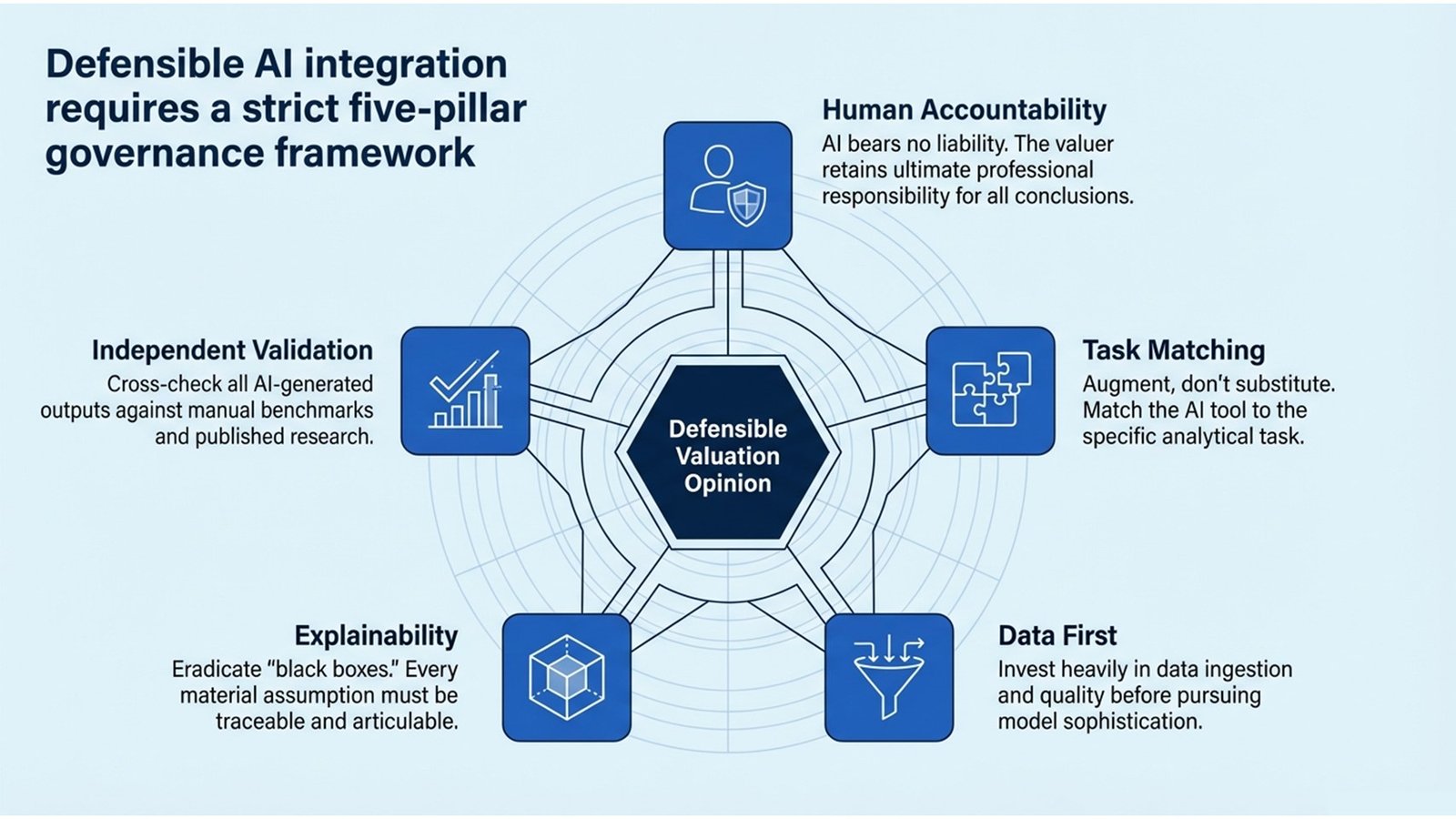

The fifth discipline is to establish governance systems that keep the human professional accountable. Professional liability does not rest with AI tools; it rests with the valuer. Firms that incorporate AI intangible asset valuation approaches into their routine practice must develop clear guidelines: who reviews and authorises AI-generated outputs, what documentation is necessary to show that a human review took place, and how the firm will respond if an AI-generated output is found to be materially inaccurate. These governance arrangements are not bureaucratic overhead; they are the professional infrastructure that makes the adoption of AI credible and sustainable.

Real-World Applications, Challenges, and Lessons Learned

The real potential and the practical limits of AI in intangible asset valuation are demonstrated by a number of early-adopter cases across the valuation and advisory industry. One of the most instructive is the use of an NLP-driven contract analysis platform by a global Big Four advisory firm in a large purchase price allocation for the acquisition of a European software company. The target held a substantial body of software licence agreements with large enterprise customers in various jurisdictions: several hundred contracts in total, spanning a variety of product lines, geographies, and term structures. In the past, the team would have reviewed a sample of the most material contracts and extrapolated its assumptions to the rest. Using an AI contract review tool, the team was able to review every contract in the population in a fraction of the time, extracting key commercial terms, identifying outliers, and producing a much more accurate estimate of the customer relationship intangible than a sample-based method would have allowed. The lesson was that in large, document-heavy valuations, AI-driven contract analysis is not a luxury but a significant enhancement to the quality of the analysis. It is a direct demonstration of how AI transforms valuation practice in high-data-volume, time-pressured M&A.

The second example is a specialist IP valuation boutique that used machine learning models to value a pharmaceutical company's drug pipeline during a cross-border licensing negotiation. To predict the probability-weighted revenue of every asset in the pipeline, the team used an ensemble of ML models trained on data on historical clinical trial success, regulatory approval rates, and peak sales of similar compounds. These outputs were then fed into a risk-adjusted NPV (rNPV) model, one of the fundamental modern intangible asset valuation methods for pharmaceutical IP, to generate fair value estimates for every compound. Compared with the expert-judgement-based process the boutique had followed before, the ML-augmented process produced more consistent results across analysts, reduced the time needed to complete revenue modelling by about forty percent, and yielded a sensitivity analysis that the client found more useful as a negotiation aid. The challenge it faced was model interpretability: the client's board needed a clear demonstration of how the ML projections had been derived before it would accept them as a starting point for negotiation, and the team had to do a lot of work to provide it.
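For readers unfamiliar with rNPV, the core calculation is short: each year's cash flow is weighted by the cumulative probability that the compound is still alive at that point, then discounted. The sketch below shows that mechanic only. The cash flows, probabilities, and discount rate are invented for illustration and have no connection to the case described above; the function name `rnpv` is likewise an assumption.

```python
def rnpv(cash_flows, cumulative_pos, discount_rate):
    """Risk-adjusted NPV of a single pipeline asset.

    cash_flows: expected cash flow per year if the asset is still in development
        or on the market (negative for development costs).
    cumulative_pos: probability that the asset has survived to each year.
    """
    if len(cash_flows) != len(cumulative_pos):
        raise ValueError("cash_flows and cumulative_pos must have equal length")
    return sum(
        cf * p / (1.0 + discount_rate) ** t
        for t, (cf, p) in enumerate(zip(cash_flows, cumulative_pos), start=1)
    )


# Hypothetical compound: two years of trial costs, then launch revenues.
cash_flows = [-20.0, -30.0, 0.0, 80.0, 120.0]
cumulative_pos = [1.0, 0.6, 0.3, 0.25, 0.25]  # assumed success probabilities
risk_adjusted = rnpv(cash_flows, cumulative_pos, 0.10)
unadjusted = rnpv(cash_flows, [1.0] * 5, 0.10)
print(f"rNPV: {risk_adjusted:.1f}   NPV ignoring attrition risk: {unadjusted:.1f}")
```

In a workflow like the one described, an ML ensemble would inform the success probabilities and revenue figures, while the rNPV arithmetic itself stays transparent and easy to explain to a board.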

The second process flow below offers a practical guide for teams aiming to introduce AI tools into an established valuation practice, taking into account the governance and risk management implications raised by the cases above.

| Phase | Team Actions | Risks and Safeguards |
|---|---|---|
| Assessment | Review existing processes; identify the most repetitive tasks that can be automated with AI | Do not automate tasks that require nuanced judgement before the team has attained AI literacy |
| Tool Selection | Evaluate AI platforms against valuation use cases; run controlled pilots | Avoid vendor lock-in; verify data provenance and model explainability |
| Integration | Incorporate AI outputs into existing model templates; create human-review checkpoints | Run parallel manual review during the first 3–6 months; record deviations |
| Governance | Establish model validation guidelines; assign responsibility for AI-generated outputs | Ensure the valuer remains accountable for conclusions; include explanatory notes in reports |
| Continuous Improvement | Retrain models on new transaction data; monitor output quality against audit results | Review models periodically; revise protocols as AI standards change |

Process Flow 2: Introducing AI into a Valuation Team – Phases, Actions, and Safeguards

The third example comes from forensic accounting, and it illustrates the limits of AI in valuation just as the earlier examples demonstrate its potential. A litigation support team used an AI-based financial modelling tool to estimate the value of a customer list in a trade secrets case. The tool produced a revenue forecast and attrition schedule that were technically sophisticated, but during cross-examination involving the opposing party's expert it emerged that the model had been trained on data from a different industry with significantly different churn characteristics. The tribunal subsequently questioned the output and reduced the weight given to it. The lesson, that AI outputs are only as credible as the data and assumptions on which they are based, reinforces the importance of the validation discipline described above. However sophisticated the AI tool used in an intangible asset valuation, rigour is still required.

Tools, Maturity, and the Professional Development Imperative

The ecosystem of AI tools that can support modern approaches to valuing intangible assets is growing very quickly, and the pace of change means that any particular tool recommendation can become outdated within a year. What matters more to professionals building long-term capability is understanding the categories of AI use that are relevant to valuation, the quality indicators that distinguish a genuinely robust tool from a superficially impressive one, and the data requirements that determine whether a particular tool can actually be used in a given engagement. The following table presents a sample of the current tool landscape by valuation use case; it is intended as a practical guide, not a comprehensive directory.

| Use Case | Representative Tools / Techniques | Data Required | Maturity Level |
|---|---|---|---|
| IP and patent analytics | Cipher, Derwent Innovation, PatSnap AI | Patent filings, claims, citation graphs | High |
| Brand sentiment tracking | Brandwatch, Sprinklr, custom NLP pipelines | Social media, reviews, news feeds | High |
| Comparable transaction search | Kira Systems, Luminance, LLM-based contract review | M&A filings, licence agreements, court records | Moderate–High |
| Financial forecasting | XGBoost, Prophet, LSTM neural networks | Historical financials, macroeconomic data, industry data | Moderate |
| People / human capital analytics | Visier, Crunchbase talent information, LinkedIn APIs | HR records, attrition, compensation benchmarks | Moderate |
| ESG intangible scoring | MSCI ESG Analytics, Refinitiv AI ESG, custom models | Sustainability reports, regulatory filings, news | Emerging |

Table 2: AI Tools and Platforms by Valuation Use Case

The maturity levels in the table indicate how far AI applications in each area have been proven through widespread professional use, published research, and acceptance by regulatory bodies. High-maturity applications such as patent analytics and brand sentiment tracking rest on a track record of several years, developed vendor ecosystems, and an accumulating body of case precedent that valuers can draw on. Emerging uses such as AI-based ESG intangible scoring are technically viable, but still await the data standards, methodological norms, and regulatory acceptance needed for broad use in formal valuation opinions. Knowing where a particular tool falls on this maturity spectrum is an important element of responsible professional judgement about when and how to use it.

For professionals who want to acquire genuine competence in AI-assisted intangible asset valuation, the professional development imperative is clear. Fluency with AI tools is helpful, but it should be built on top of sound conventional valuation skills. A practitioner with a deep understanding of how the relief-from-royalty method works, what drives the choice of royalty rates, and how to build and stress-test an income-based model is much better placed to use AI tools responsibly than one who knows how to operate the tools but lacks the conceptual understanding to recognise when their outputs are unreliable. The best professional development combines formal education in contemporary intangible asset valuation techniques with supervised, hands-on experience of AI tools, as is true of developing expertise in any technical field.

Conclusion: Actionable Insights for Professionals

The adoption of artificial intelligence in intangible asset valuation is not a vision of the future but a current reality, one that is transforming how engagements are scoped, how data is collected, how models are built, and how conclusions are presented and justified. The main argument of this article is that AI changes valuation practice not by eliminating professional judgement but by expanding what that judgement can be applied to: larger volumes of data, more complex analyses, larger asset portfolios, and shorter turnaround times. By being aware of both the opportunities and the constraints of AI in this regard, professionals will be better placed to add value for clients, employers, and the markets they serve. The practical priorities can be summarised in the following actionable insights.

Build your traditional valuation foundation first, then AI fluency. The biggest error professionals can make in their rush to embrace AI intangible asset valuation techniques is to think that AI will let them avoid the demanding conceptual work of learning valuation theory, accounting principles, and economics. It will not. AI tools enhance the abilities of a competent analyst; they do not compensate for a lack of analytical competence. Learn the mechanics of the income, cost, and market approaches, and why they produce the results they do, and then layer AI tools on top.

Make explainability a top priority in every output you create with AI. Your valuation opinion may face an audit review of a purchase price allocation, a tax authority's scrutiny of a transfer pricing position, or a client board's review of a strategic transaction; in each case, the credibility of your opinion will depend on how clearly and thoroughly you can explain it. Design AI-enhanced workflows so that all material inputs, assumptions, and outputs are traceable, explained, and justified, not only in the model but also in the narrative of the report.

Keep abreast of changes in the standards governing AI use in professional valuation. Major standard setters, such as the International Valuation Standards Council (IVSC), the American Society of Appraisers, and the Royal Institution of Chartered Surveyors, have all started to address AI governance in their technical guidance. Regulatory authorities in key jurisdictions are also starting to set out their expectations for the use of AI-generated outputs in financial reporting, tax, and litigation contexts. By monitoring these developments, professionals will be better placed to anticipate how modern intangible asset valuation techniques are expected to develop and to adapt their practice accordingly.

Discuss AI adoption with colleagues and the wider professional community. The questions surrounding AI governance, data quality, model explainability, and professional liability in valuation are genuinely hard, and no single practitioner or firm has all the answers. Peer experience-sharing, specialist working groups, and continuing education forums are good venues in which to exchange experience, compare practices, and collaboratively develop the norms that will govern responsible use of AI in the valuation profession.

Last but not least, approach AI adoption with disciplined optimism. The improvements that AI intangible asset valuation methods can offer in speed, reach, consistency, and depth of analysis are real and significant. So are the hazards of poorly governed, under-validated, or over-confident AI-generated outputs. The professionals who flourish in this environment will be those who view AI as a powerful resource to be used critically and rationally, not as a silver bullet that lets them do professional valuation without hard work. The hard work remains the same; AI simply extends what can be done well within the time and resources available.